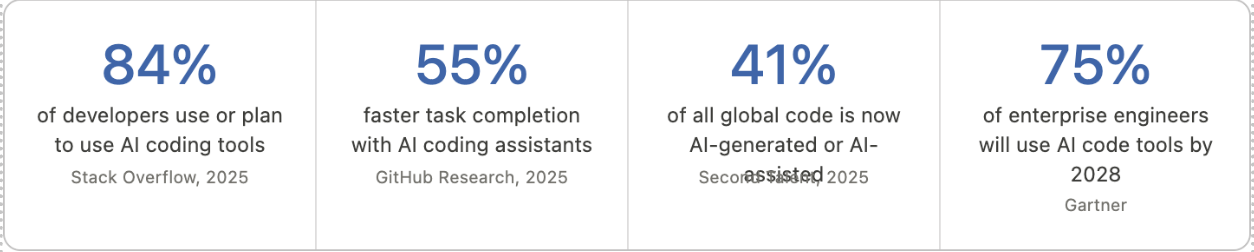

The debate has moved past "AI or developers?" It is now "developers who use AI versus those who don't." GitHub Copilot now writes nearly half of a developer's code on GitHub, with some Java developers reporting rates as high as 61%. Nearly 80% of new developers begin using AI coding assistants within their first week. This is not a future scenario; it is happening right now.

But traditional programming hasn't disappeared. Far from it. The most effective enterprise engineering organizations in 2025 leverage the stability of rule-based code alongside the adaptive power of AI coding agents. The question is not which is better. It is how to architect workflows that use both where each is strongest.

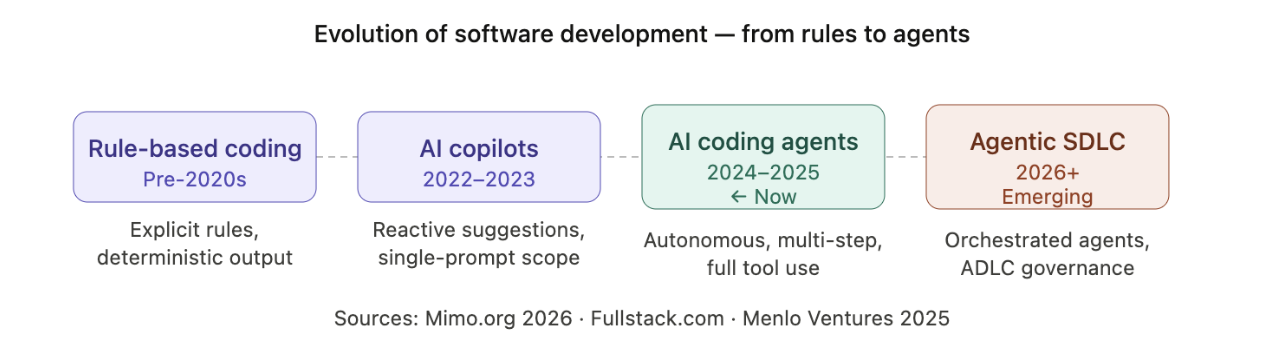

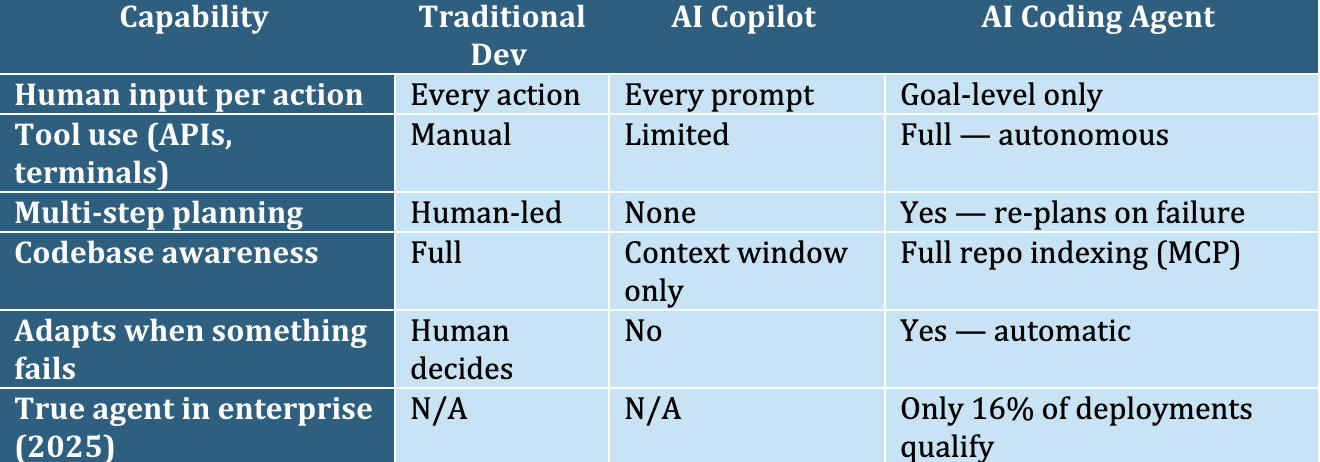

Traditional software development relies on explicit, hand-coded rules where every condition is defined by a human developer, producing deterministic, predictable output. AI coding agents are probabilistic systems that learn patterns from large code datasets, generate code autonomously, execute multi-step tasks across tools, and adapt without requiring manual rule rewrites for every new scenario.

Consider the spam-filter example Mimo uses. A traditional approach means listing banned words: "if an email contains 'win money', mark it as spam." Every new pattern requires a new rule authored by a human. An AI-based approach shows the system thousands of spam and legitimate examples. It learns to recognize patterns on its own and adapts as spammers evolve, without anyone writing a new rule.
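To make the contrast concrete, here is a minimal sketch of both approaches. It swaps in a tiny multinomial naive Bayes classifier as the "learned" side purely for illustration; Mimo's example does not name a specific algorithm. The banned-phrase list, the toy training set, and all function and class names are hypothetical. The rule-based filter misses a spam wording nobody wrote a rule for, while the learned filter flags it because similar wording appeared in its labelled examples.

```python
import math
import re
from collections import Counter

# Rule-based approach: a human must add every new pattern to this list (hypothetical rules).
BANNED_PHRASES = ["win money", "free prize"]


def rule_based_is_spam(email: str) -> bool:
    """Flag an email only if it contains a hand-written banned phrase."""
    text = email.lower()
    return any(phrase in text for phrase in BANNED_PHRASES)


def tokenize(text: str) -> list[str]:
    """Lowercase and split into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesSpamFilter:
    """Tiny multinomial naive Bayes classifier with Laplace (add-one) smoothing."""

    def __init__(self) -> None:
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.doc_counts = Counter()

    def train(self, examples: list[tuple[str, str]]) -> None:
        # Each example is (email_text, label) with label "spam" or "ham";
        # both labels must appear at least once or the log prior is undefined.
        for text, label in examples:
            self.doc_counts[label] += 1
            self.word_counts[label].update(tokenize(text))

    def _log_score(self, tokens: list[str], label: str, vocab_size: int) -> float:
        total_docs = sum(self.doc_counts.values())
        score = math.log(self.doc_counts[label] / total_docs)  # log prior P(label)
        total_words = sum(self.word_counts[label].values())
        for token in tokens:
            # Add-one smoothing keeps unseen words from zeroing out the probability.
            count = self.word_counts[label][token]
            score += math.log((count + 1) / (total_words + vocab_size))
        return score

    def is_spam(self, email: str) -> bool:
        vocab = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        tokens = tokenize(email)
        spam_score = self._log_score(tokens, "spam", len(vocab))
        ham_score = self._log_score(tokens, "ham", len(vocab))
        return spam_score > ham_score


# Hypothetical toy training set; real filters learn from thousands of examples.
TRAINING_EXAMPLES = [
    ("win money now", "spam"),
    ("claim your free prize today", "spam"),
    ("cheap crypto giveaway claim now", "spam"),
    ("meeting moved to friday", "ham"),
    ("please review the attached report", "ham"),
    ("lunch today with the team", "ham"),
]

spam_filter = NaiveBayesSpamFilter()
spam_filter.train(TRAINING_EXAMPLES)

new_pattern = "Exclusive crypto giveaway, claim now"
print(rule_based_is_spam(new_pattern))  # False: no rule covers this wording
print(spam_filter.is_spam(new_pattern))  # True: learned from labelled examples
```

Adapting the learned filter means adding labelled examples and retraining; adapting the rule-based filter means a human editing `BANNED_PHRASES`.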

This distinction between explicit rules and learned patterns underpins everything that follows. Neither approach is universally superior. The enterprises that win are those that learn precisely when to deploy each.

Below is the evolution from rule-based coding all the way to the Agentic SDLC era now emerging:

Here is the full head-to-head comparison across nine dimensions every enterprise team should evaluate:

The "Dory Problem": Generative AI lacks persistent institutional memory of your codebase's history, architectural intent, and past failures. It can generate syntactically correct code at speed but struggles to troubleshoot complex system-level issues, learn from past mistakes, or identify race conditions in concurrent systems. The institutional knowledge held by experienced engineers remains irreplaceable.

As of 2025, 82% of developers use AI coding tools daily or weekly (Qodo). The real productivity gain comes from where AI redirects developer attention. AI now absorbs approximately 40% of the time developers previously spent on boilerplate code: the repetitive, mechanical work that fills screens but requires little creative thought. This frees mental energy for architecture, problem-solving, and high-level strategy.

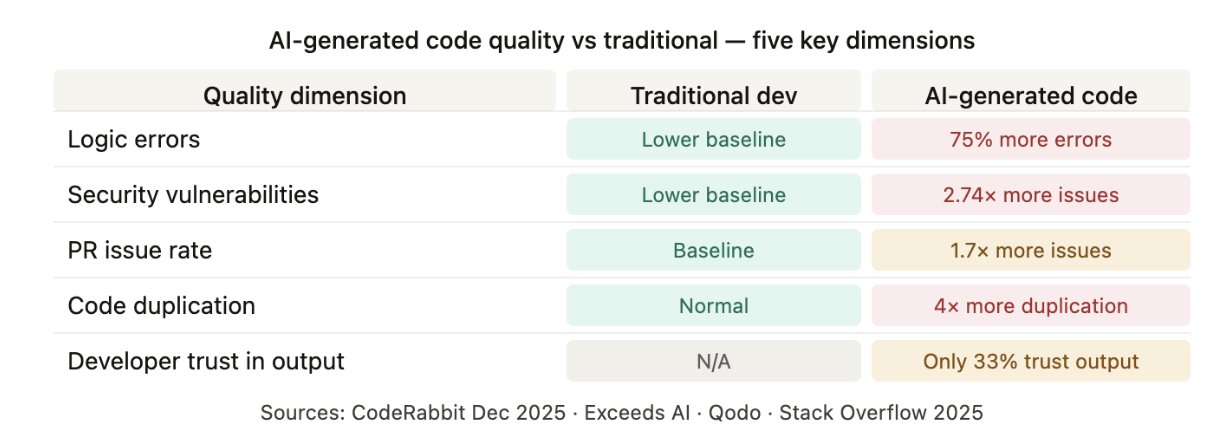

But here is the number nobody advertises: the verification tax. Nearly two-thirds of development teams (64%) report that manually verifying AI-generated code takes as long as writing the code from scratch (TechReviewer, 2025). Speed gains are real, but they only materialize when review workflows are modernized simultaneously.

The costliest enterprise mistake is applying AI coding agents uniformly across all development tasks. Knowing the boundary between strengths and failure modes is what separates productive AI adoption from expensive technical debt.

AI coding agents now touch every stage of the SDLC, from design-to-code prototyping to autonomous incident remediation. The teams winning in 2025 are not adopting AI at a single stage; they are integrating it across the entire pipeline so that speed gains compound rather than cancel out.

GitHub Copilot suggesting your next function is impressive. An AI agent that receives a goal such as "refactor the authentication module to JWT, write full test coverage, and open a PR with a plain-English summary" and completes it without further human input is transformative. That is the categorical difference between a copilot and a coding agent.

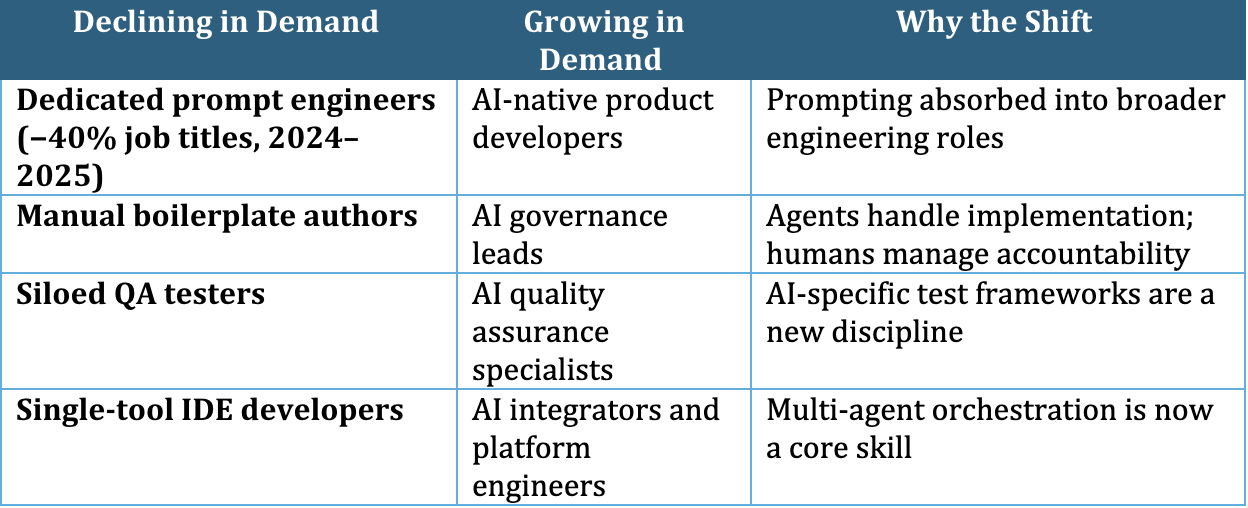

"The human developer's role is evolving from writing low-level code to becoming an orchestrator: someone who instructs agents, iterates on complex design challenges, and manages the collective output of agent teams." (Mimo.org, AI vs Traditional Programming Report, 2026)

Reality check: Menlo Ventures' 2025 State of Generative AI report found that only 16% of enterprise and 27% of startup deployments qualify as true agentic systems, where an AI plans actions, observes feedback, and adapts. Most production deployments labelled "agents" are sophisticated fixed-sequence workflows. That is still valuable, but setting the right expectations before building internal business cases is critical.
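The difference between a fixed-sequence workflow and a true agentic system can be sketched in a few lines. This is a toy model under stated assumptions, not how any particular product works: `ToyRepo` is a hypothetical stand-in for a codebase, and "fix the alphabetically first failing test" is a deliberately trivial planning policy. The point is structural. The fixed workflow runs the same steps regardless of results, while the agentic loop observes feedback, plans its next action, acts, and escalates to a human when its iteration budget runs out.

```python
from dataclasses import dataclass, field


@dataclass
class ToyRepo:
    """Hypothetical stand-in for a codebase; failing tests are the feedback an agent observes."""
    failing_tests: set[str] = field(
        default_factory=lambda: {"test_login", "test_token_refresh"}
    )

    def run_tests(self) -> set[str]:
        return set(self.failing_tests)

    def apply_fix(self, test_name: str) -> None:
        self.failing_tests.discard(test_name)


def fixed_sequence_workflow(repo: ToyRepo) -> list[str]:
    """Runs the same predetermined steps every time and never reacts to results."""
    log = []
    for step in ("generate_code", "run_tests", "open_pr"):
        if step == "run_tests":
            repo.run_tests()  # result is ignored: there is no feedback loop
        log.append(step)
    return log


def agentic_loop(repo: ToyRepo, max_iterations: int = 5) -> list[str]:
    """Observe, plan, act; repeat until the goal is met or the budget runs out."""
    log = []
    for _ in range(max_iterations):
        failures = repo.run_tests()   # observe feedback
        if not failures:
            break                     # goal met
        target = sorted(failures)[0]  # plan: toy policy, fix the first failure
        repo.apply_fix(target)        # act
        log.append(f"fix:{target}")
    # The final observation decides whether to ship or hand back to a person.
    log.append("open_pr" if not repo.run_tests() else "escalate_to_human")
    return log


print(fixed_sequence_workflow(ToyRepo()))  # opens a PR even though tests still fail
print(agentic_loop(ToyRepo()))
print(agentic_loop(ToyRepo(), max_iterations=1))
```

Many production "agents" look like `fixed_sequence_workflow` with an LLM call inside each step, which is still useful but is not the adaptive loop the Menlo Ventures definition describes.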

The speed narrative around AI coding agents is compelling. The quality narrative is more complicated, and enterprises that ignore it pay a compounding cost in technical debt, security incidents, and failed compliance audits.

These numbers do not argue against AI coding agents. They argue for a structured, layered review model. Teams that implement this model, with AI agents handling first-pass filtering and human engineers focusing on architectural alignment and business logic, report performing three times more code reviews with the same engineering headcount, while quality improves alongside velocity.
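A layered review model is, at its core, a routing decision. The sketch below is one hypothetical way to express it; the `SENSITIVE_PREFIXES` paths, the `PullRequest` shape, and the route names are all assumptions for illustration, and `automated_findings` stands in for whatever your AI reviewer, linters, and tests report. Mechanical issues bounce back to the author before any human looks, and human attention is reserved for changes where architectural or business judgment matters.

```python
from dataclasses import dataclass, field

# Hypothetical path prefixes where business logic or security risk demands senior review.
SENSITIVE_PREFIXES = ("auth/", "payments/", "compliance/")


@dataclass
class PullRequest:
    files: list[str]
    # Findings from the automated first pass (AI reviewer, linters, tests); assumed input.
    automated_findings: list[str] = field(default_factory=list)


def route_review(pr: PullRequest) -> str:
    """Decide which review layer a pull request goes to next."""
    # Layer 1: the automated pass bounces mechanical issues before any human looks.
    if pr.automated_findings:
        return "return_to_author"
    # Layer 2: human attention goes where architectural and business judgment matter.
    if any(path.startswith(SENSITIVE_PREFIXES) for path in pr.files):
        return "senior_human_review"
    return "standard_human_review"


print(route_review(PullRequest(files=["docs/readme.md"], automated_findings=["unused import"])))
print(route_review(PullRequest(files=["payments/ledger.py"])))
print(route_review(PullRequest(files=["ui/button.tsx"])))
```

`str.startswith` accepts a tuple, so one call checks every sensitive prefix.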

Critical data point: Exceeds AI's 2025 longitudinal study found that 96% of developers express doubts about AI-generated code reliability. AI-touched code shows higher incident rates over time, revealing technical debt that standard DORA metrics cannot detect because those metrics do not separate AI-authored code from human-written code. Enterprise teams need longitudinal code tracking, not just throughput dashboards.
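Longitudinal tracking starts with recording provenance per change so incidents can be attributed back to it. The sketch below assumes your tooling can tag each merged change as AI-assisted or not and link production incidents to the change that caused them; both are assumptions, and the `MergedChange` record and function name are hypothetical. It computes incidents per 1,000 changed lines per month, split by origin, which is exactly the split an aggregate throughput dashboard hides. The sample records are made up for illustration and are not data from the study cited above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class MergedChange:
    """One merged change. Assumes your tooling records whether AI assisted in writing it."""
    month: str          # e.g. "2025-01"
    ai_assisted: bool
    lines_changed: int
    incidents: int      # production incidents later traced back to this change


def incident_rate_by_origin(changes: list[MergedChange]) -> dict[tuple[str, str], float]:
    """Incidents per 1,000 changed lines, keyed by (month, "ai" or "human")."""
    lines: dict[tuple[str, str], int] = defaultdict(int)
    incidents: dict[tuple[str, str], int] = defaultdict(int)
    for change in changes:
        key = (change.month, "ai" if change.ai_assisted else "human")
        lines[key] += change.lines_changed
        incidents[key] += change.incidents
    return {key: 1000 * incidents[key] / lines[key] for key in lines if lines[key] > 0}


# Made-up illustrative records, not data from any study.
history = [
    MergedChange("2025-01", True, 500, 1),
    MergedChange("2025-01", False, 1000, 1),
    MergedChange("2025-02", True, 400, 2),
    MergedChange("2025-02", False, 800, 1),
]

for key, rate in sorted(incident_rate_by_origin(history).items()):
    print(key, rate)
```

Tracking the rate month by month, rather than as a single snapshot, is what surfaces debt that only shows up as incidents long after the code shipped.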

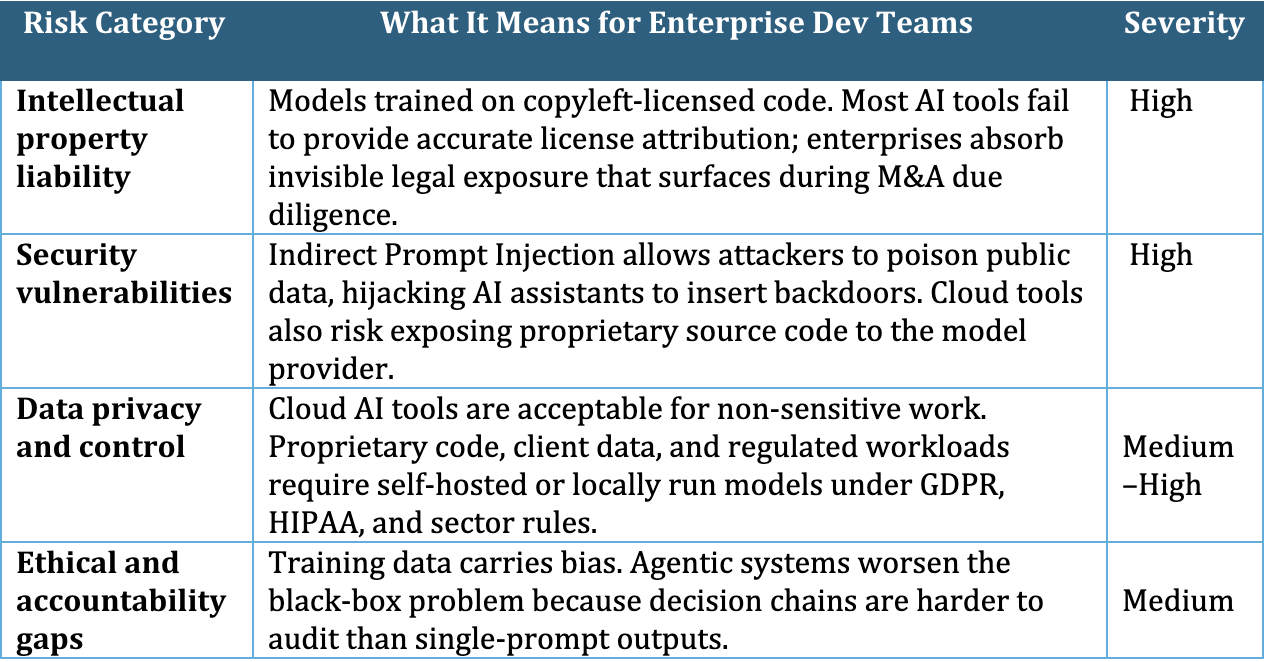

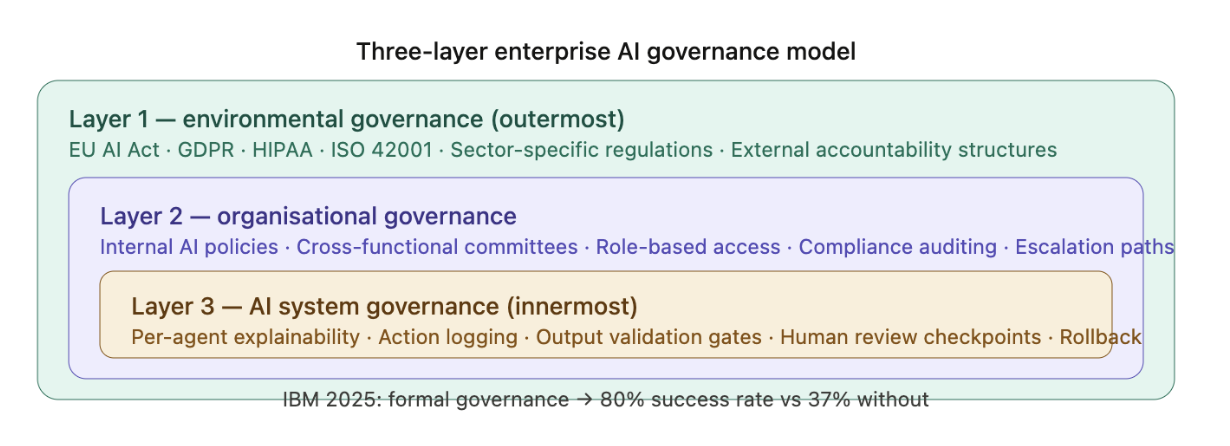

As AI coding agents move from developer tooling to enterprise infrastructure, the challenge shifts from raw speed to managing legal, security, and ethical risk. IBM's 2025 research makes the stakes clear: organizations with formal AI governance strategies succeed at an 80% rate. Those without formal strategies succeed only 37% of the time.

The most successful enterprise engineering organizations in 2025 are not choosing between AI coding agents and traditional development. They are building structured hybrid workflows where each approach does what it does best. 59% of developers already run three or more AI coding tools in parallel, mixing Copilot, Claude Code, Cursor, and CodeRabbit for different tasks within the same workflow.

AI-savvy developers now command entry-level salaries of $90,000–$130,000 compared to $65,000–$85,000 for traditional roles, a 30–50% premium reflecting the skills required to direct, validate, and govern AI coding systems.

How Can Hexaview Technologies Help?

Hexaview Technologies is a Tier-1 systems integrator and managed agentic services provider trusted by 100+ enterprises across wealth management, fintech, healthcare, and regulated industries. We do not build pilots. We build production-grade AI systems that deliver measurable, auditable ROI.

The conflict between AI coding agents and traditional development is a false binary. The future of enterprise software engineering is not one or the other; it is learning to conduct both as complementary instruments, each deployed where it adds the most value.

Traditional programming is not going away. Architectural thinking, institutional memory, the debugging of complex concurrent systems, and the precise rule-based logic powering financial transactions and compliance workflows all remain deeply human. What AI coding agents are doing is reclaiming the hours engineers spend on work that does not require human judgment, so those engineers can spend more time on work that does.

Gartner predicts that by 2028, 75% of enterprise software engineers will use AI coding tools as a standard part of their workflow. The window for building genuine competitive advantage through early adoption is measured in months, not years. That advantage comes from developing the governance frameworks, review workflows, team skills, and institutional knowledge of where AI adds value in your specific engineering context.

Not sure where AI coding agents fit in your development stack? Hexaview's enterprise AI team has helped organisations across financial services, healthcare, and technology design governance-first AI development workflows that deliver measurable results. We start with your codebase, your constraints, and your compliance requirements, not a generic playbook.

Talk to a Hexaview expert today →

1. What is the difference between AI coding agents and traditional development?

Traditional development follows fixed rules written by developers, while AI coding agents learn from data and complete tasks autonomously based on goals.

2. Are AI coding agents replacing developers?

No. They are changing the role of developers by shifting focus toward design, review, and decision-making rather than repetitive coding.

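3. How much faster is AI-driven development?

AI tools can speed up task completion and code review, but the gains only materialize when review workflows are modernized too: 64% of teams report that manually verifying AI-generated code takes as long as writing it from scratch.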

4. What are the main quality risks of AI-generated code?

AI-generated code can have more errors, security issues, and duplication, which makes proper review and governance essential.

5. Should enterprises choose AI agents or traditional development?

A hybrid approach works best, where AI handles repetitive tasks and humans focus on critical logic and decision-making.