From reactive copilots to fully autonomous agents: how agentic AI is reshaping the way enterprise teams plan, build, test, and deploy software in 2025 and beyond.

Agentic AI refers to autonomous AI systems that understand goals, devise multi-step plans, execute actions across tools and systems, adapt when things go wrong, and complete complex workflows, all without requiring step-by-step human instruction at every stage.

For the past two years, the enterprise AI conversation was dominated by generative AI copilots: tools like GitHub Copilot, Microsoft Copilot, and ChatGPT for business. These were genuinely transformative, but they shared a fundamental limitation: they waited. Every useful output required a human prompt. Every step needed a human decision.

Agentic AI breaks that loop entirely. An agentic system doesn't wait to be asked what to do next. It reasons about a goal, plans the sequence of actions required, executes those actions using real tools (APIs, terminals, browsers, databases), monitors outcomes, and re-plans when something fails. The difference isn't incremental. It's categorical.
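
The plan, execute, monitor, re-plan cycle described above can be sketched in a few lines. This is a toy illustration, not a production design: the `plan` function stands in for an LLM planner, and the tool names (`checkout`, `fast_tests`, `full_tests`, `report`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str
    arg: str


@dataclass
class AgentRun:
    goal: str
    log: list[str] = field(default_factory=list)
    done: bool = False


def plan(goal: str, failed_tools: set[str]) -> list[Step]:
    """Toy planner: prefer the fast test runner, fall back once it has failed."""
    runner = "full_tests" if "fast_tests" in failed_tools else "fast_tests"
    return [Step("checkout", goal), Step(runner, goal), Step("report", goal)]


def run_agent(goal: str, tools: dict[str, Callable[[str], str]],
              max_replans: int = 2) -> AgentRun:
    run = AgentRun(goal)
    failed: set[str] = set()
    for _ in range(max_replans + 1):
        for step in plan(goal, failed):           # plan
            try:
                result = tools[step.tool](step.arg)  # execute
                run.log.append(f"ok:{step.tool}:{result}")  # monitor
            except RuntimeError as exc:
                run.log.append(f"fail:{step.tool}:{exc}")
                failed.add(step.tool)
                break                              # abandon this plan and re-plan
        else:
            run.done = True                        # every step succeeded
            return run
    return run                                     # gave up after max_replans


def flaky_fast_tests(_: str) -> str:
    raise RuntimeError("runner unavailable")


TOOLS = {
    "checkout": lambda goal: "workspace ready",
    "fast_tests": flaky_fast_tests,
    "full_tests": lambda goal: "all tests passed",
    "report": lambda goal: "summary posted",
}

run = run_agent("verify release branch", TOOLS)
for entry in run.log:
    print(entry)
```

The point of the sketch is the control flow: a failure does not end the run or wait for a human prompt. It feeds back into the next plan, bounded by `max_replans` so the agent cannot loop forever.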

IBM's 2025 research puts it plainly: 89% of CIOs now consider agent-based AI a strategic priority, not an experiment. The enterprise has moved past "what is this?" to "how fast can we deploy it?"

Watch out for "Agent Washing"

Gartner warns of a growing practice of vendors rebranding existing RPA tools, chatbots, and simple AI assistants as "agents" without genuine autonomous planning capabilities. Before evaluating any platform, verify that it performs the three hallmarks of genuine agentic AI: multi-step reasoning, real tool use, and adaptive re-planning.

Understanding where agentic AI sits relative to prior AI generations is essential for enterprise planning.

"AI agents will evolve rapidly, progressing from task-specific agents to agentic ecosystems, transforming enterprise applications from productivity tools into platforms enabling seamless autonomous collaboration." — Anushree Verma, Senior Director Analyst, Gartner

The agentic AI market isn't a projection; it's an acceleration already in progress. The global AI agents market reached $7.92 billion in 2025, and analysts project it will hit $236 billion by 2034, representing roughly a 30× expansion in nine years. For enterprise-focused deployments specifically, the segment is growing at a 46.2% CAGR, from $2.58 billion in 2024 to $24.5 billion by 2030.
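
A quick way to sanity-check growth figures like these is to compute the expansion multiple and the implied compound annual growth rate directly from the endpoint values. The sketch below uses only the dollar figures quoted above; the helper names are illustrative, and a published CAGR may differ slightly from the computed one if the analyst used a different base year.

```python
def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end / start) ** (1 / years) - 1


# Global AI agents market, in billions of USD (figures quoted in the text)
overall_multiple = growth_multiple(7.92, 236.0)
overall_cagr = cagr(7.92, 236.0, years=2034 - 2025)

# Enterprise-focused segment, in billions of USD
enterprise_multiple = growth_multiple(2.58, 24.5)
enterprise_cagr = cagr(2.58, 24.5, years=2030 - 2024)

print(f"Overall:    {overall_multiple:.1f}x, CAGR {overall_cagr:.1%}")
print(f"Enterprise: {enterprise_multiple:.1f}x, CAGR {enterprise_cagr:.1%}")
```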

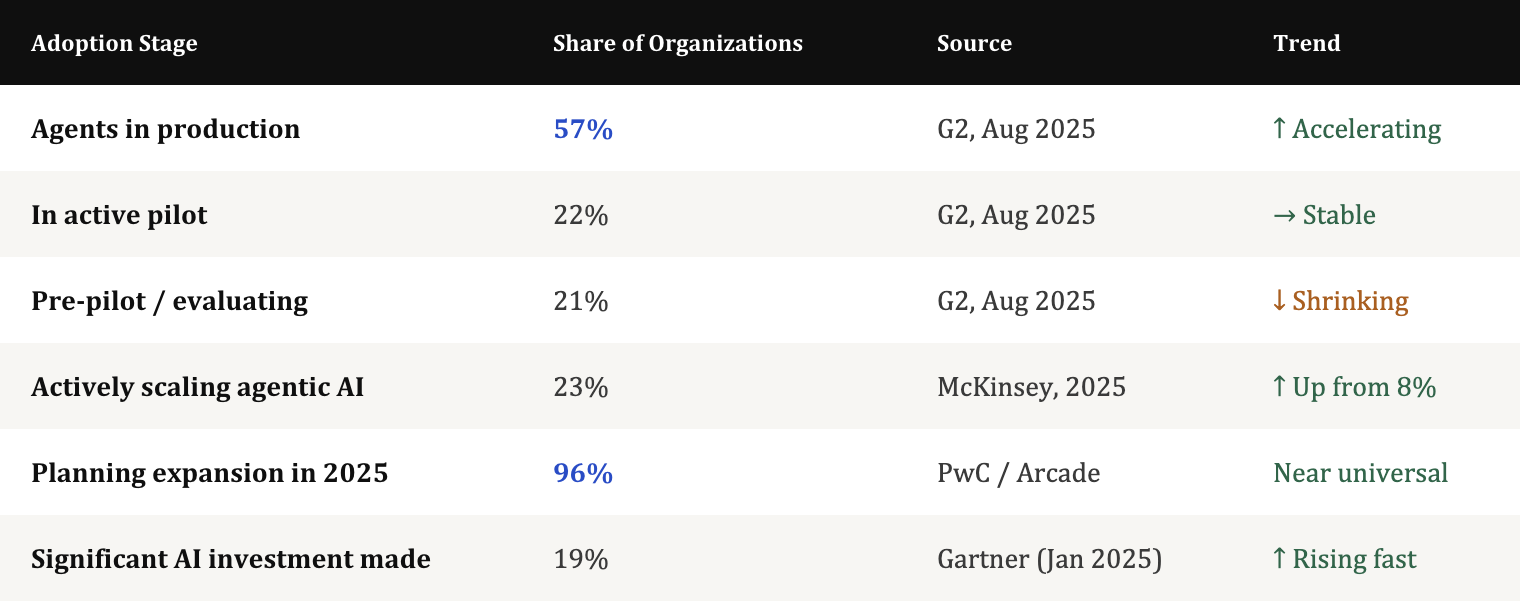

But market size tells you about supply. Adoption data tells you about demand, and the demand signal is unmistakable.

The industry concentration is equally telling: 70% of all proof-of-concept deployments come from just three sectors: banking and financial services, retail, and manufacturing. And across all four major verticals, software development is consistently a top-three use case.

Gartner's projections crystallize the scale of what's coming: 33% of all enterprise software applications will include embedded agentic AI by 2028, up from less than 1% in 2024. That's at least a 33-fold increase in four years. And by that same year, at least 15% of day-to-day work decisions will be made autonomously through agentic AI, up from 0% today.

Gartner Macro Projection: Gartner's best-case scenario estimates agentic AI could drive approximately 30% of enterprise application software revenue by 2035, surpassing $450 billion, up from just 2% in 2025. Organizations building agentic foundations today are positioning for that wave.

Code became AI's first true "killer use case." According to Menlo Ventures' 2025 State of Generative AI report, 50% of developers now use AI coding tools daily, rising to 65% in top-quartile organizations. The AI coding tools market exploded from $550 million to $4 billion in 2025 alone, driven by a decisive shift in capability: models can now interpret entire codebases and execute multi-step tasks, not just autocomplete a single function.

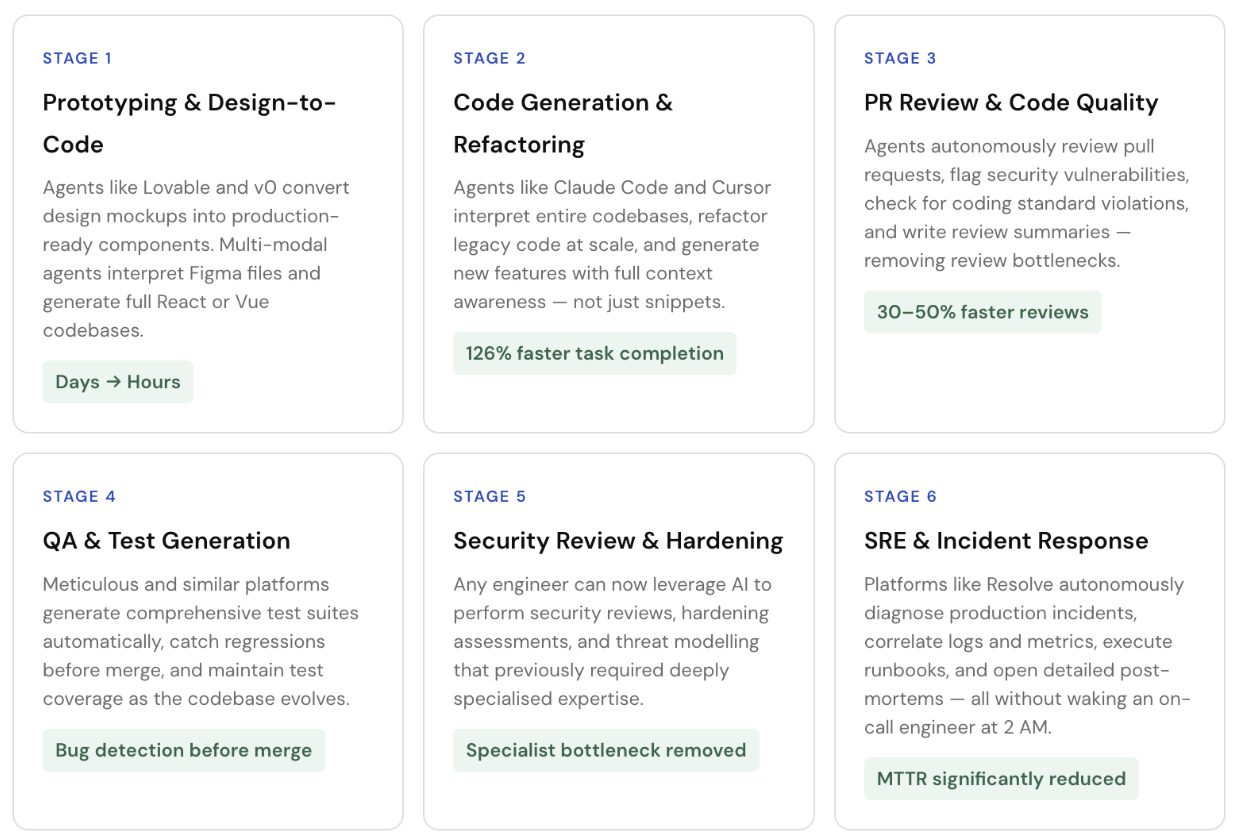

Teams report 15%+ velocity gains when AI agents are integrated across the SDLC. Individual developer productivity, measured by task completion speed, has increased by 126% in AI-assisted environments, according to Master of Code's 2025 research. But the bigger transformation isn't speed; it's scope.

Agentic AI Across the Entire Software Development Lifecycle

Enterprise development teams face a key architectural decision: single-agent workflows that process tasks sequentially through one context window, versus multi-agent systems where an orchestrator coordinates specialized agents working in parallel.

Sequential Processing

One agent handles the full task sequentially. This works well for bounded, well-defined tasks where context needs to remain unified and where coordination overhead would be costly.

An orchestrator delegates subtasks to specialized agents running in parallel, then synthesizes results. Faster for complex tasks; requires governance to avoid cascading failures.

Reality Check: True Agents Are Still Rare

Despite the hype, Menlo Ventures found that only 16% of enterprise and 27% of startup deployments qualify as true agentic systems, where an LLM plans actions, observes feedback, and adapts. Most "AI agents" in production today are sophisticated fixed-sequence workflows. That's still valuable, but it's important to set realistic expectations when evaluating vendor claims.

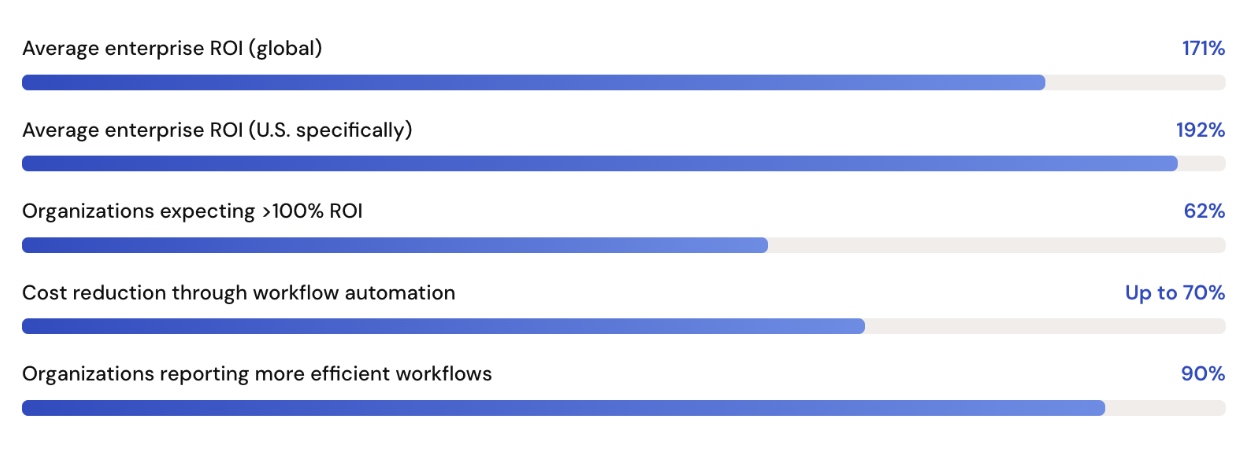

The ROI Case Is Compelling

The financial argument for agentic AI in enterprise software development is increasingly well-evidenced. Organizations across the board report strong returns, not just in projected figures, but in measured outcomes from live deployments.

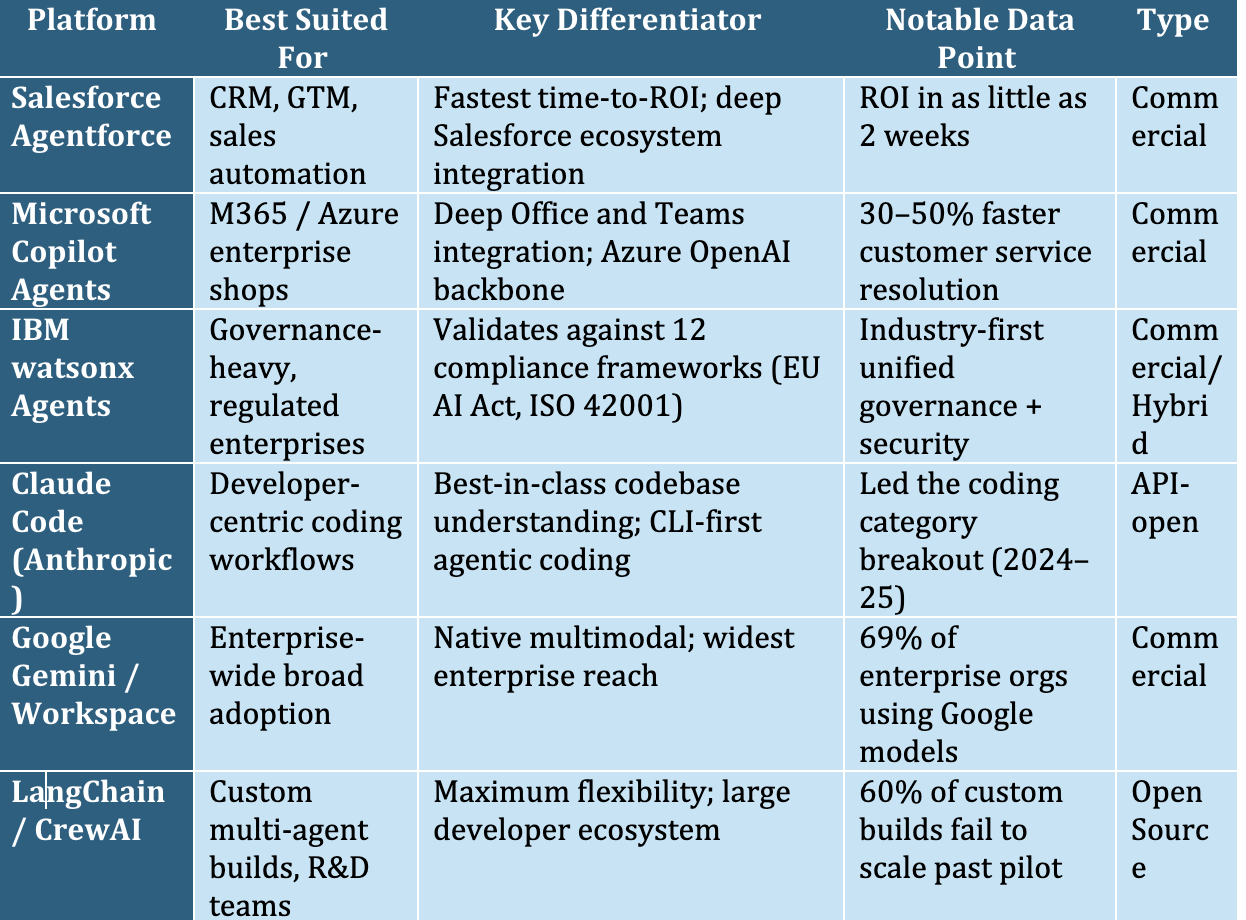

AI-enabled workflows have more than tripled in profit contribution: from 2.4% in 2022, to 3.6% in 2023, to 7.7% in 2024. Top-performing organizations, those with formal AI governance strategies, achieve up to 18% ROI, well above typical cost-of-capital thresholds. Salesforce Agentforce customers report ROI in as little as two weeks in some deployment scenarios.

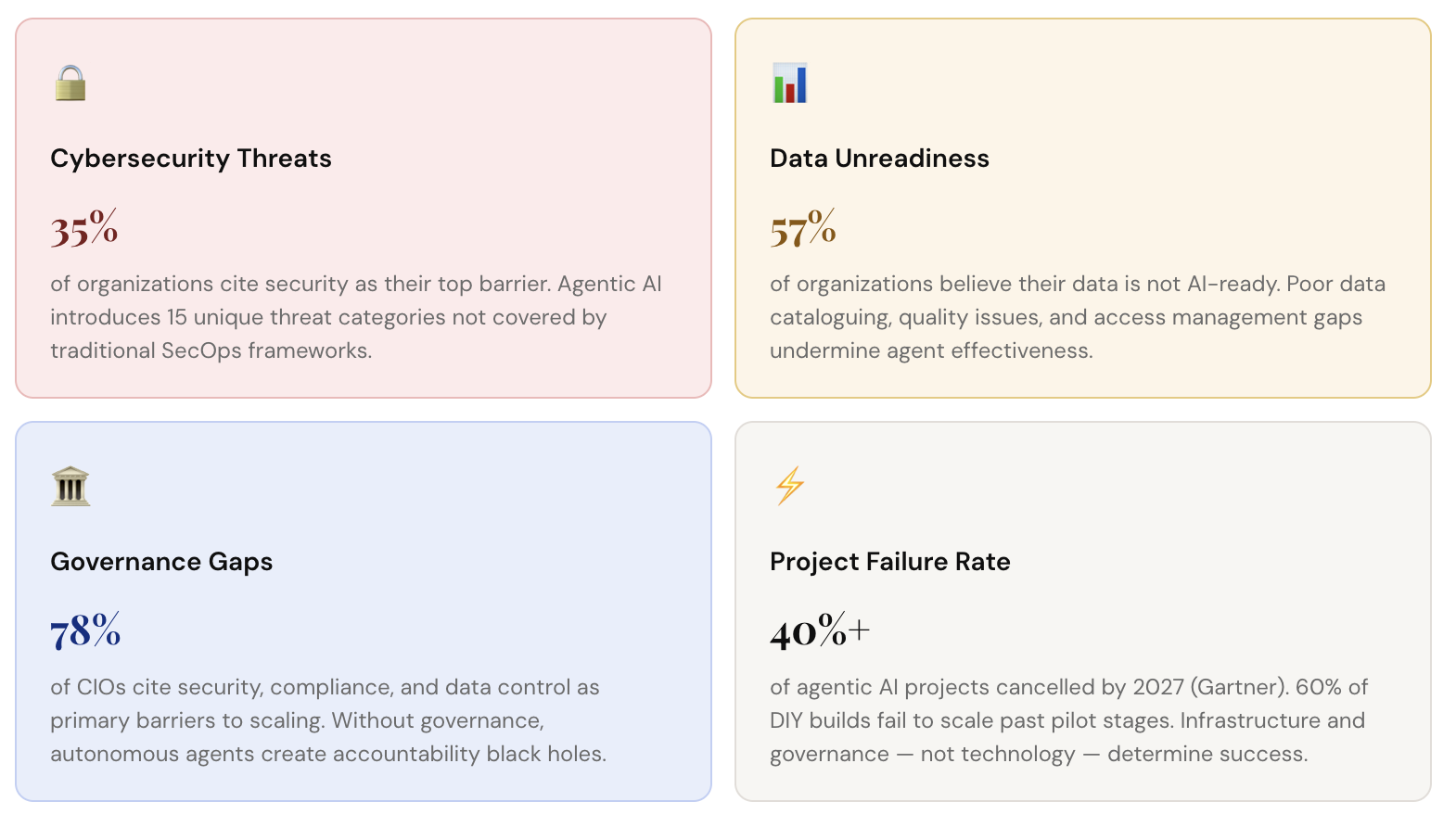

The Risks Are Equally Real

Enterprise leaders should approach agentic AI with optimism tempered by operational honesty. Gartner's landmark prediction that over 40% of agentic AI projects will be cancelled by the end of 2027 is not a pessimistic outlier. It's a warning rooted in observable patterns: runaway costs, unclear business value, and inadequate risk controls.

The enterprise agentic AI platform market is consolidating quickly. Commercial platforms are winning speed-to-ROI; open-source alternatives are winning customization and control. Understanding the landscape is critical before committing to a build-or-buy strategy.

The build-vs-buy decision is less about technology and more about your organization's operational context. Consider three factors: speed-to-value (commercial wins), customization depth (open-source wins), and governance overhead (managed platforms win decisively for regulated industries).

For most enterprise development teams, a hybrid approach is optimal: a commercial MCP-compatible platform as the orchestration layer, with custom agents built on top for organization-specific workflows. MCP (Model Context Protocol) compatibility ensures your investment isn't locked to a single vendor as the ecosystem evolves.

The governance gap is the single biggest differentiator between successful enterprise agentic AI deployments and expensive failures. IBM's research is unequivocal: organizations with formal AI governance strategies achieve an 80% success rate in AI adoption. Those without formal strategies succeed only 37% of the time.

Layer 1: Outermost

Environmental Governance

Regulatory compliance (EU AI Act, GDPR, sector-specific rules), industry standards (ISO 42001), and the external accountability structures within which AI systems must operate. Sets the non-negotiable boundaries.

Layer 2: Organizational

Organizational Governance

Internal policies for AI use, cross-functional accountability committees, role-based access controls, compliance auditing workflows, and escalation paths when agents behave unexpectedly. IBM's watsonx.governance provides tooling for this layer.

Layer 3: Innermost

AI System Governance

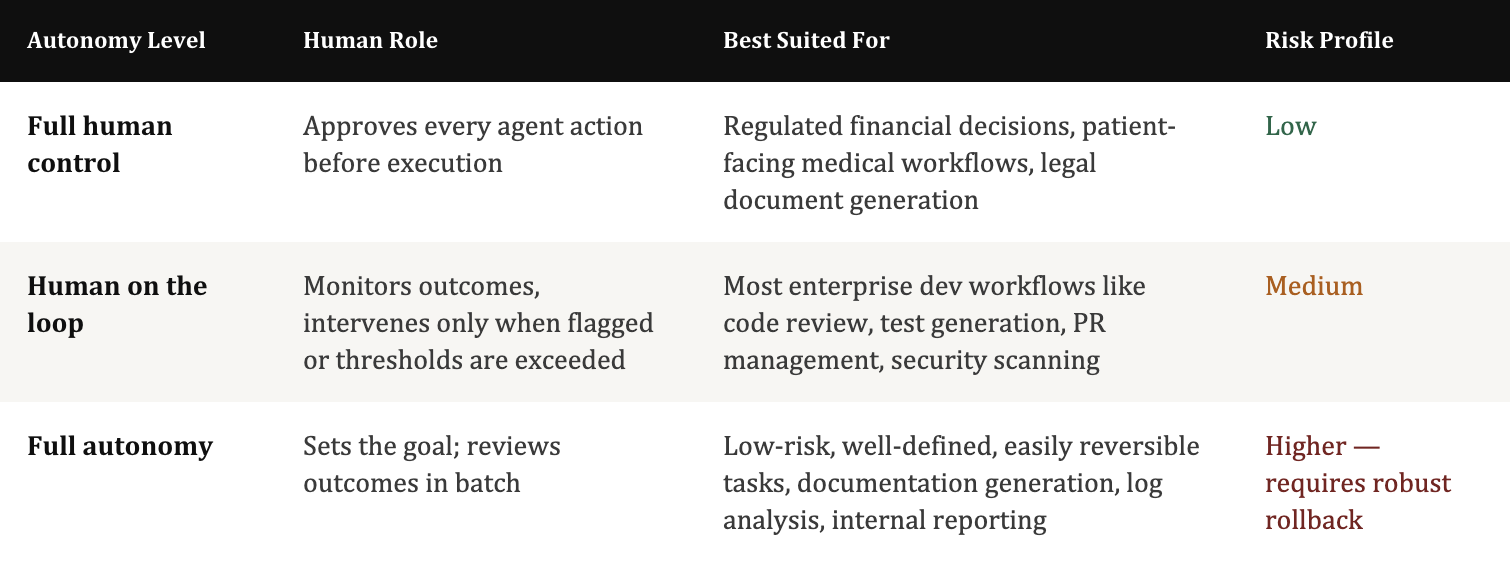

Per-agent explainability requirements, action logging and audit trails, output validation gates, human review checkpoints for high-stakes decisions, and technical resilience safeguards. This is where the "human-in-the-loop" design is operationalized.
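
The innermost layer is the one that translates most directly into code. The sketch below shows one possible shape for it: every proposed action passes an output validation gate, high-stakes actions are routed to a human, and each decision lands in an audit trail along with the agent's stated rationale. The action names, the `HIGH_STAKES` set, and the validation rule are all illustrative assumptions.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical list of actions that always require a human checkpoint.
HIGH_STAKES = {"deploy_to_production", "delete_data"}


@dataclass
class AuditRecord:
    timestamp: str
    agent: str
    action: str
    rationale: str   # the agent's explanation, kept for explainability
    decision: str


AUDIT_LOG: list[AuditRecord] = []


def validate_output(output: str) -> bool:
    """Toy validation gate: reject empty output or output that leaks a key."""
    return bool(output.strip()) and "BEGIN PRIVATE KEY" not in output


def gate_action(agent: str, action: str, rationale: str, output: str) -> str:
    if not validate_output(output):
        decision = "rejected"
    elif action in HIGH_STAKES:
        decision = "needs_human_review"
    else:
        decision = "approved"
    # Log every decision, including rejections, so the trail is complete.
    AUDIT_LOG.append(AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        agent=agent,
        action=action,
        rationale=rationale,
        decision=decision,
    ))
    return decision


first = gate_action("qa-agent", "open_pull_request", "adds missing tests", "diff ...")
second = gate_action("release-agent", "deploy_to_production", "all checks green", "plan ...")
third = gate_action("docs-agent", "update_readme", "sync docs", "   ")

for record in AUDIT_LOG:
    print(json.dumps(asdict(record)))
```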

The EU AI Act, the world's first comprehensive AI regulation, is now in force and applies to enterprise AI systems deployed in Europe. High-risk AI applications (including those affecting employment decisions, critical infrastructure, and law enforcement) face strict transparency, explainability, and auditability requirements. U.S. federal frameworks are evolving rapidly. Enterprise teams building agentic systems today should architect for explainability from day one, not as a retrofit.

Based on patterns from successful enterprise deployments and the failure modes of the ones that didn't make it, here is the implementation sequence that consistently produces results.

Governance isn't a constraint on innovation; it's what enables you to move fast without catastrophic missteps. Define accountability structures, compliance checkpoints, explainability requirements, and human escalation paths before your first production deployment. IBM's research shows that formal AI strategies raise success rates from 37% to 80%. Build the governance framework first; everything else flows from it.

57% of organizations admit their data isn't AI-ready, and agents are only as good as the data they can access and reason over. Before deploying, audit your data for completeness, quality, accessibility, and cataloguing. Fix access management so agents can reach what they need without security gaps. Implement data contracts where cross-team data sharing is involved. This step consistently determines whether pilots scale or stall.

Resist the temptation to boil the ocean. The most successful enterprise teams choose one use case with clear, measurable success metrics (cycle time, error rate, escalation frequency) and get it to production. Code review automation, QA test generation, and security scanning are ideal first candidates: they're verifiable (humans can audit outputs), bounded (limited blast radius if something goes wrong), and impactful (immediate developer time savings). Once one use case is proven, scaling becomes a process replication exercise.

Model Context Protocol (MCP) is rapidly emerging as the interoperability standard for agentic AI, enabling different agents, models, and tools to communicate in a standardized way. Choosing MCP-compatible platforms now protects your investment as the ecosystem evolves. It also enables the hybrid multi-agent architectures that 66.4% of the market is converging on, where specialized agents from different vendors can collaborate under a unified orchestrator. Vendor lock-in is the silent killer of long-term AI programmes; MCP compatibility is the antidote.
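
To make the interoperability claim concrete: as far as the published MCP specification goes, clients and servers exchange JSON-RPC 2.0 messages, with methods such as `tools/list` to discover a server's tools and `tools/call` to invoke one. The sketch below only builds those request envelopes. The tool name `run_unit_tests` and its arguments are hypothetical, and the current specification should be checked before relying on any field shown here.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id per session


def mcp_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request envelope of the kind MCP uses."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
        "params": params,
    }


# Ask a server which tools it exposes.
list_tools = mcp_request("tools/list", {})

# Invoke one tool by name. "run_unit_tests" and its arguments are made up
# for illustration; a real server advertises its own tool names and schemas.
call_tool = mcp_request(
    "tools/call",
    {"name": "run_unit_tests", "arguments": {"path": "services/billing"}},
)

print(json.dumps(call_tool, indent=2))
```

Because the envelope is the same regardless of which vendor built the agent or the tool server, swapping either side does not require rewriting the other, which is the practical meaning of avoiding lock-in here.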

The right autonomy level is not a one-time decision; it's a moving target as models improve and your team builds confidence in specific workflows. Start conservative: human-on-the-loop for most development tasks. As you accumulate data on agent reliability in specific contexts, you can dial autonomy up deliberately. Set clear thresholds (e.g., "the agent can auto-merge PRs under 200 lines that pass all CI checks and have zero security flags; anything outside those bounds escalates to a human reviewer") and enforce them programmatically, not just through policy documents.
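
Enforcing a threshold programmatically can be as small as one function that sits between the agent and the merge button. Here is a minimal sketch of the example rule above; the `PullRequest` fields and the decision labels are assumptions made for illustration, not any particular platform's API.

```python
from dataclasses import dataclass

MAX_AUTO_MERGE_LINES = 200  # "under 200 lines" from the example policy


@dataclass(frozen=True)
class PullRequest:
    lines_changed: int
    ci_passed: bool
    security_flags: int


def merge_decision(pr: PullRequest) -> str:
    """Return 'auto_merge' only when every condition of the policy holds."""
    within_bounds = (
        pr.lines_changed < MAX_AUTO_MERGE_LINES
        and pr.ci_passed
        and pr.security_flags == 0
    )
    return "auto_merge" if within_bounds else "escalate_to_human"


small_clean = merge_decision(PullRequest(lines_changed=120, ci_passed=True, security_flags=0))
too_large = merge_decision(PullRequest(lines_changed=200, ci_passed=True, security_flags=0))
flagged = merge_decision(PullRequest(lines_changed=40, ci_passed=True, security_flags=1))

print(small_clean, too_large, flagged)
```

Keeping the limit in a single named constant means that dialing autonomy up later is a reviewed, one-line change instead of an edit to a policy document nobody enforces.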

Agentic AI refers to autonomous systems that can plan and complete multi-step tasks like coding, testing, and deployment with minimal human input.

Unlike tools like GitHub Copilot, agentic AI works goal-first and can execute entire workflows independently instead of just suggesting code.

Financial services, healthcare, manufacturing, and retail are leading adopters due to their complex software workflows.

Key risks include governance gaps, data readiness issues, cybersecurity threats, and unclear ROI measurement.

Enterprises report over 100% ROI on average, with faster development cycles and improved productivity.

Yes, but mainly for specific tasks like testing, code review, and documentation rather than full automation.

MCP (Model Context Protocol) enables AI agents to connect with tools and systems, ensuring flexibility and avoiding vendor lock-in.

Track productivity, quality, efficiency, and business impact metrics before and after implementation.